Some training scenarios are tough to drill properly, and it has nothing to do with the quality of instructors or the curriculum on paper. The problem sits inside the scenarios themselves. Certain situations simply refuse to be staged safely, repeated often enough, or pushed to the cognitive load that real operations demand. The five outlined here all live in that awkward space — and they also happen to be the scenarios where personnel competence carries the biggest operational weight. That combination turns the training gap into a readiness problem worth taking seriously.

Below, we walk through each scenario, explain why conventional methods keep coming up short, and lay out what VR actually changes in practice.

1. Active Shooter Response

Few situations demand the same blend of split-second decision-making, controlled movement, and accurate threat suppression as an active shooter incident. The cognitive load is brutal, and that is exactly what traditional drills have a hard time reproducing.

Where conventional training falls flat. A live exercise inside a controlled facility — role-players, marked corridors, supervising instructors — covers the procedure neatly. Entry, formation, threat ID, suppression. The procedure gets rehearsed. What rarely gets rehearsed is the chaos. Real scenes come loaded with sirens, civilians sprinting in unpredictable directions, gunfire echoing off walls in ways that confuse the ear, ambiguous threats that don’t announce themselves. Staging that level of sensory overload in a controlled facility is risky for the trainees and the role-players alike, so most agencies water it down by default.

Then there’s frequency. Coordinating a full live drill needs a building, role-players, instructors, and a clear schedule. Most agencies pull it off once a quarter if they’re lucky. Active shooter response, more than nearly any other tactical discipline, depends on pattern recognition built through repetition. Quarterly is not enough to make a response reflexive.

What VR does differently. VR puts trainees inside the full sensory storm — smoke clouding sight lines, civilian NPCs panicking and bolting through doorways, gunfire coming from positions trainees actually have to locate by sound and visual cue. The procedure runs the same way it does in live training, but the cognitive conditions surrounding it match real incidents far more closely.

The repetition payoff is the part that often gets underestimated. One trainee can complete twenty active shooter runs in the time a single live drill takes to organize. Multiply that across a training cycle and the gap between VR-trained and conventionally trained personnel becomes substantial.

There is also room to fail. In live drills, instructors step in to correct mistakes before anyone gets hurt. In VR, the trainee can choose the wrong room, fire on the wrong silhouette, or miss a communication beat — and then watch the consequence play out. Seeing what goes wrong, in context, is one of the most reliable ways to teach what right looks like.

2. Hostage Rescue Operations

Hostage rescue is the classic case of an operation that almost never happens but matters enormously when it does. Training for it through conventional methods runs into structural problems quickly.

Where conventional training falls flat. Putting together a live hostage rescue drill is expensive. You need hostile role-players, hostage role-players, environments that resemble likely operational settings, and enough safety oversight to run tactical entries without anyone getting hurt. The cost limits how many scenarios a unit can run, and the variety stays narrow. Most units rehearse a handful of variations across an entire training cycle.

The deeper issue is that hostage rescue is more about judgment than choreography. Reading the body language of a hostile actor. Choosing the moment of entry. Knowing when to keep negotiating and when to commit to force. Managing the seconds after entry, when everyone in the room is moving at once. Conventional training nails down the choreography. The judgment layer — the part that actually decides whether the operation succeeds — rarely gets enough reps to mature.

Specialized units also need rehearsal that conventional facilities can’t deliver. Specific building layouts, specific hostile profiles, specific hostage configurations. None of that gets staged on demand at a standard training site, which means operators arrive on real missions without having rehearsed the conditions they’re about to face.

What VR does differently. Variety and frequency both jump. A VR system can run hostage scenarios across different building types, different hostile dispositions, different hostage placements, and different time pressures, one after another. Trainees rehearse the entire arc — assessment, approach, entry, threat neutralization, hostage recovery, post-incident handling — across scenarios that would be impractical to mount live.
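One way to picture that variety is as a grid of scenario parameters. The sketch below is illustrative only: the parameter names and values are hypothetical and not drawn from any particular VR platform. It builds every combination of the four dimensions named above, then draws a reproducible set of distinct scenarios for one training session.

```python
import random
from itertools import product

# Hypothetical parameter values; a real platform would define its own.
BUILDING_TYPES = ["apartment", "office", "school"]
HOSTILE_DISPOSITIONS = ["negotiating", "agitated", "barricaded"]
HOSTAGE_PLACEMENTS = ["single_room", "split_across_floors"]
TIME_LIMITS_MIN = [5, 15, 30]


def scenario_matrix():
    """Return every combination of the four parameters as a dictionary."""
    keys = ("building", "disposition", "placement", "time_limit_min")
    combos = product(
        BUILDING_TYPES, HOSTILE_DISPOSITIONS, HOSTAGE_PLACEMENTS, TIME_LIMITS_MIN
    )
    return [dict(zip(keys, combo)) for combo in combos]


def training_block(n, seed=0):
    """Draw n distinct scenarios for one session; the seed makes it repeatable."""
    rng = random.Random(seed)
    return rng.sample(scenario_matrix(), n)


print(len(scenario_matrix()))  # 54 combinations from four short lists
for scenario in training_block(3):
    print(scenario)
```

Even with three or fewer options per dimension, the grid holds 54 distinct scenarios, which is far more than the handful of variations a live training cycle typically covers.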

For specialized units, the mission-rehearsal use case is where VR really earns its place. Known building schematics can be loaded into the simulation. Suspect profiles can be modeled with realistic behavior. Personnel rehearse the actual mission before going operational, surfacing coordination gaps and stress-testing contingencies in advance. This single application has produced some of the clearest operational payoffs VR has delivered in defense and security work to date.

Judgment reps also accumulate. Trainees practice the decision points — negotiate, enter, hold, escalate — across enough scenarios that the right reads start becoming intuitive. Conventional training cannot match that volume.

3. Mental Health Crisis Intervention

Mental health response is now a fixture in modern law enforcement training requirements. It is also one of the hardest disciplines to replicate using classroom or role-play methods.

Where conventional training falls flat. Classroom role-play covers the de-escalation framework — the right questions, the right tone, the right body language. What it misses is the cognitive demand of a live encounter. A person in crisis doesn’t follow a script. Their behavior shifts on a dime. Verbal cues are subtle and easy to misread. Physical proximity changes the dynamic in ways that are hard to predict. Add a few bystanders, conflicting information from family members, and a radio crackling about another call holding, and the encounter becomes a perceptual puzzle that classroom rehearsal simply cannot reproduce.

Role-play also has a credibility problem. When a colleague pretends to be in crisis, both sides know it’s pretend. The cognitive condition that matters most — reading another human’s state under genuine uncertainty — does not engage the way it does in a real call.

There is one more issue: repeated role-play of mental health scenarios can desensitize officers over time, which is bad for everyone involved. Conventional training has limited ways to manage that risk.

What VR does differently. AI-driven NPCs can produce verbal and behavioral patterns that look and sound like someone actually in crisis — without the role-play credibility issue. NPCs respond to officer behavior in real time, with micro-expressions, tone shifts, and movement that engage the perceptual muscles the encounter actually requires. The trainee is reading a simulated person, not a colleague who’s about to break character and laugh.

Variety scales as well. VR can run encounters featuring different crisis types — suicidal ideation, psychotic episode, substance-induced agitation, acute panic — across different settings and bystander dynamics. Officers build up a perceptual range that a few classroom sessions cannot deliver.

The trauma-informed design layer is also worth flagging. Well-built VR scenarios include pre-scenario briefings, voluntary pause options, and structured debriefs, safeguards that role-play often skips. For sensitive scenario categories, this is not a nice-to-have. It is part of how serious programs protect officers from the psychological cost of repeated exposure.

4. Urban Warfare and Built-Up Area Operations

Urban combat sits at the intersection of every kind of operational complexity. It is also the scenario family where conventional training facilities struggle hardest to keep up.

Where conventional training falls flat. Purpose-built urban warfare facilities — CQB houses, MOUT sites — exist throughout most national defense systems. They do the job for what they are. They are also expensive, geographically fixed, and limited in layout variety. A unit trains on whatever it has access to, against whatever threat configurations the site supports. Period.

Real urban operations vary wildly. Building heights, floor plans, civilian density, traffic patterns, line-of-sight conditions, communication infrastructure — every operational area shifts the picture. Personnel who train repeatedly on a single CQB layout grow comfortable with that layout, not with urban warfare generally.

Combined arms in urban environments is harder still. Vehicle-infantry coordination, air support integration, dismounted movement across multi-story buildings, casualty evacuation through contested terrain — staging this live demands multiple units, complex coordination, and a heap of safety overhead. Most organizations run a full combined-arms urban exercise annually at best.

What VR does differently. Urban environments can be rendered at whatever scale, layout, and threat density the scenario requires. Different cities. Different building stocks. Different threat dispositions. Different civilian densities. Trainees work across a range of urban settings that no fixed facility can match.

Combined arms work becomes practical to drill at higher frequency. Squad-level operations with vehicle support, aerial coordination, and multi-element maneuver run inside simulation without the live-exercise overhead. Communications procedures, command coordination, and unit tactics all get reps in the conditions that actually matter.

Mission-specific urban rehearsal also fits here. For operations targeting known locations, VR can render the actual operational environment using available terrain and structural data. Operators rehearse the exact target, identifying chokepoints and coordinating movement before stepping off. The payoff is direct and measurable, particularly for special operations communities and any unit running deliberate planning against known objectives.

5. De-escalation and Force Continuum Application

De-escalation training addresses the most common decision points an officer faces — when to use force, when to hold, when to step back, when to escalate. The consequences of getting these decisions wrong are also among the most public and politically charged in modern policing.

Where conventional training falls flat. The mechanical sequence of force application — drawing a weapon, presenting a baton, applying restraints — drills well through traditional methods. The judgment underneath does not. Deciding whether force is warranted, what level fits the situation, when to transition between levels, and how to articulate that decision afterward is a cognitive challenge, not a mechanical one. Conventional methods don’t generate the scenario variety or cognitive load needed to mature that judgment.

Role-play hits the credibility ceiling again. A colleague pretending to be uncooperative doesn’t generate the cognitive condition of a real encounter. The trainee knows the colleague won’t actually swing, won’t actually run, won’t actually pull anything from a waistband. The decision-making that matters in real life runs on uncertainty that role-play cannot manufacture.

Frequency is the other constraint. Force continuum judgments need volume to become reflexive. Annual or semi-annual training does not get there. Officers walk into actual encounters with their decision patterns shaped less by deliberate training and more by departmental folklore and personal history.

What VR does differently. Force continuum scenarios run at a frequency conventional training simply cannot reach. Officers face compliance failures, escalating verbal aggression, weapon presentation by subjects, sudden flight, and ambiguous threat indicators — each scenario presenting a different combination of cues, context, and correct response.

The mechanical and judgment layers stop being separate exercises. Officers practice the procedural sequence (weapon presentation, communication, positioning) alongside the decision-making that determines whether each step is appropriate. Each side reinforces the other in ways that isolated training can’t replicate.

Performance measurement matters in this category more than most. VR generates session data on which cues officers responded to, which escalation paths they took, and where their decisions drifted from doctrine. That data fuels both individual development and program-level analysis of where officers tend to misjudge force application.
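As a rough illustration of how session data could roll up into program-level analysis, the sketch below aggregates missed cues and doctrine deviations across runs. The record structure, field names, and sample values are all hypothetical and do not describe any specific system's telemetry format.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class SessionRecord:
    """One VR run. All field names here are hypothetical."""
    officer_id: str
    scenario: str
    cues_presented: list[str]
    cues_responded: list[str]
    # Decision points where the chosen action differed from doctrine.
    deviations: list[str] = field(default_factory=list)


def missed_cues(record: SessionRecord) -> list[str]:
    """Cues that appeared in the scenario but drew no response."""
    responded = set(record.cues_responded)
    return [cue for cue in record.cues_presented if cue not in responded]


def program_summary(records: list[SessionRecord]) -> dict[str, Counter]:
    """Count missed cues and doctrine deviations across all sessions."""
    missed = Counter()
    deviations = Counter()
    for record in records:
        missed.update(missed_cues(record))
        deviations.update(record.deviations)
    return {"missed_cues": missed, "deviations": deviations}


# Made-up sample sessions, for illustration only.
records = [
    SessionRecord("A01", "traffic_stop", ["hands_hidden", "verbal_threat"],
                  ["verbal_threat"], ["early_weapon_draw"]),
    SessionRecord("B02", "traffic_stop", ["hands_hidden", "flight"], ["flight"]),
    SessionRecord("A01", "domestic_call", ["verbal_threat"],
                  ["verbal_threat"], ["early_weapon_draw"]),
]
summary = program_summary(records)
print(summary["missed_cues"].most_common(1))  # [('hands_hidden', 2)]
print(summary["deviations"].most_common(1))   # [('early_weapon_draw', 2)]
```

Filtering the same records by `officer_id` before summarizing would give the individual-development view. The unfiltered summary is the program-level one.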

The ethical layer also gets baked in. Debriefs examine decisions not just tactically but ethically — reinforcing rules of engagement, proportionality, and the seriousness of force decisions. Designed properly, VR training doesn’t desensitize. It builds the kind of judgment that actually shows up in real encounters.

What These Five Scenarios Share

Step back from the individual cases and a clear pattern shows up. Each of these scenarios shares a handful of traits that conventional training was never built to handle well.

Cognitive load that depends on real conditions. Sirens, civilian movement, ambiguous cues, time pressure — none of that can be safely produced inside a controlled facility, because the conditions that generate the cognitive load are the same conditions that introduce real risk.

Variety conventional facilities can’t deliver. A CQB house has one floor plan. A MOUT site has one set of buildings. Real operations vary almost without limit. VR can vary its environments just as freely, at near-zero marginal cost.

Frequency tied to low per-session cost. Pattern recognition and reflexive judgment require reps. Conventional training is too expensive to run often enough. VR is cheap enough at the margin to enable monthly or even weekly drilling.

Failure modes that need to be safely experienced. Deliberate practice at high difficulty requires the freedom to make wrong calls and see what happens, without real-world consequence. Live training cannot offer that for high-stakes scenarios.

Performance data conventional training doesn’t generate. Telemetry across sessions reveals decision-making patterns across personnel and over time. Instructor observation is valuable, but it does not aggregate into the same kind of program-level intelligence.

None of this is meant to suggest VR should replace conventional training across the board. Weapons handling fundamentals, physical conditioning, range discipline, and equipment operation drill perfectly well through traditional methods. The five scenarios discussed here are different. They live in the gap conventional training cannot close, which is precisely where VR earns its operational keep.

KOMINA Virtual Training Capabilities

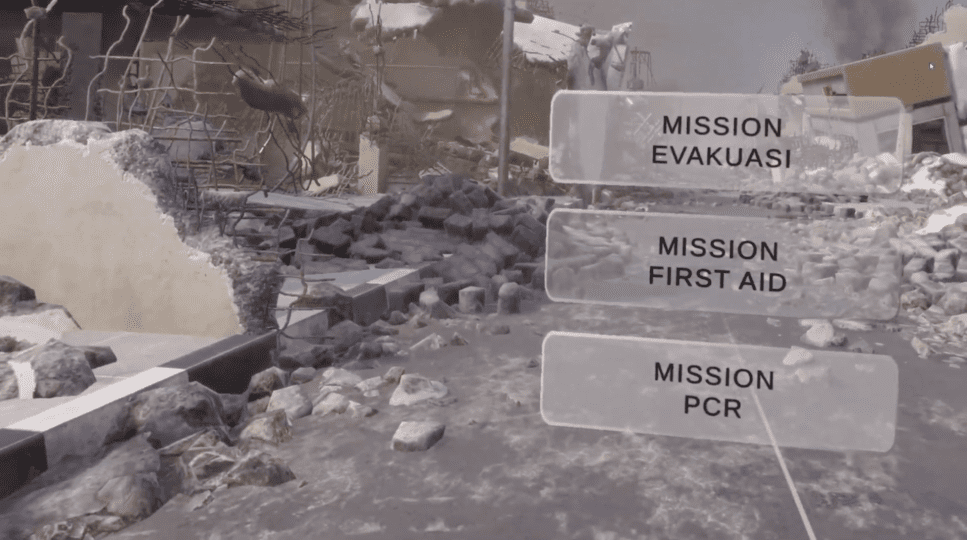

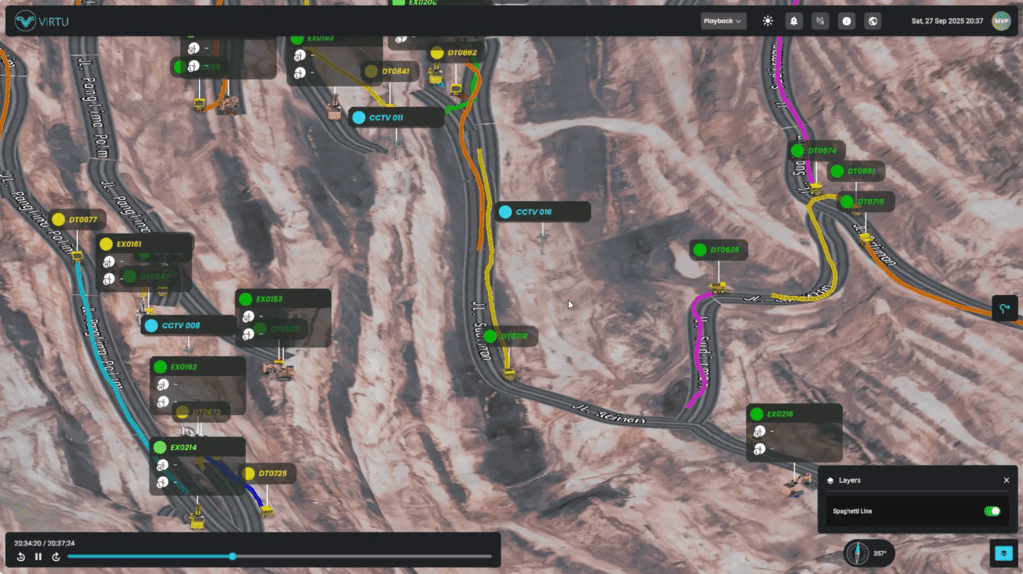

KOMINA — PT Komando Imersif Indonesia — develops virtual training systems built for the operational realities of military and law enforcement organizations. The platform is organized around scenario categories that map directly to the most operationally significant disciplines in defense and security work.

Single Combat focuses on individual weapons proficiency, marksmanship, and engagement decision-making across the service weapons inventory. Trainees build fundamentals on tracked weapon-form props before live range time.

Team Combat covers small unit tactics, room clearing, coordinated movement, and communication under pressure. Squad-level scenarios run in environments built to match real operational settings, including the active shooter and urban warfare scenarios discussed above.

HALO and HAHO modules handle high-altitude parachute insertion training: exit sequence, freefall management, canopy deployment, and landing procedures. These scenarios offer extensive rehearsal opportunity for operations that carry serious inherent risk in live training.

Vehicular Battle addresses armored vehicle crew operations, tactical driving, convoy procedures, and response to vehicular threats. Motion platforms paired with the simulation reproduce vehicle dynamics with a level of fidelity static trainers cannot match.

Command Center covers tactical operations center procedures, situational awareness management, and multi-unit coordination. Senior personnel rehearse command and control scenarios, including hostage situations and mass casualty coordination.

Custom Projects handle operational requirements outside the standard module set. Mission-specific rehearsals, specialized scenarios including mental health crisis intervention and de-escalation training, and integration with existing training infrastructure all get scoped on a per-project basis.

The platform is built in Indonesia for the operational requirements of defense and security organizations operating in Indonesian and regional contexts. Scenarios reflect locally relevant environments, terrain, equipment, and doctrinal references. Voice prompts and UI default to Bahasa Indonesia, with English available for joint exercises and regional cooperation. Performance data is logged for unit-level review and integrates with existing training records.

For capability briefings, scenario scoping, or pilot deployments, KOMINA can be reached at https://komina.co/ or +62 812 9696 7887.